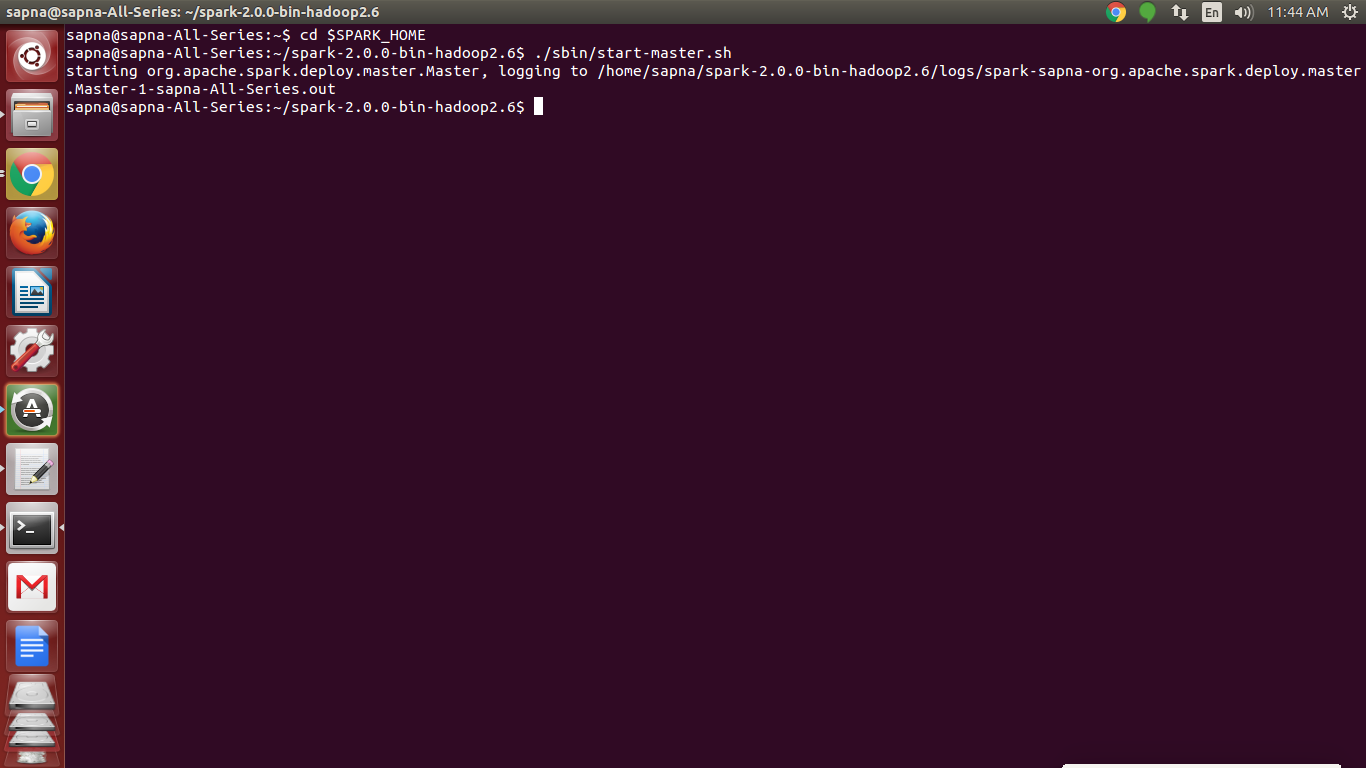

Of the two Python options (installing Python 2.7+ or linking to Python 3), the first is acceptable only if you are going to use Python packages that exist for Python 2 but not for Python 3. Otherwise, go with the second:

`sudo ln -s /usr/bin/python3 /usr/bin/python`

Running PySpark now starts the PySpark CLI with Python 3.5.2. And yes, running `python` or `python3` will both do the same thing: start Python 3.5.

Prepare a directory for the Spark home.

Create the user `spark`. Define a password; for the sake of testing, let's go with `spark`.

`sudo tar -xvzf spark-2.2.1-bin-hadoop2.7.tgz`

`sudo chown spark:spark /usr/apache/spark-2.2.1-bin-hadoop2.7`
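After creating the `python` link, it can be worth confirming that `python` really resolves to the same binary as `python3`. The following is a minimal sketch, not part of the original steps: the helper name `same_target` is made up for illustration, and `readlink -f` is assumed to behave like the GNU coreutils version that ships with Ubuntu.

```shell
# Hypothetical helper: succeed when both paths exist and resolve to the same file.
# Assumes GNU readlink (coreutils), as found on Ubuntu 16.04.
same_target() {
    [ -e "$1" ] && [ -e "$2" ] &&
        [ "$(readlink -f "$1")" = "$(readlink -f "$2")" ]
}

# Check the link created with `ln -s /usr/bin/python3 /usr/bin/python`.
if same_target /usr/bin/python /usr/bin/python3; then
    echo "python and python3 point to the same interpreter"
else
    echo "python does not resolve to python3"
fi
```

If the second message appears, the link is either missing or points somewhere else, and PySpark will likely fail to find `python`.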

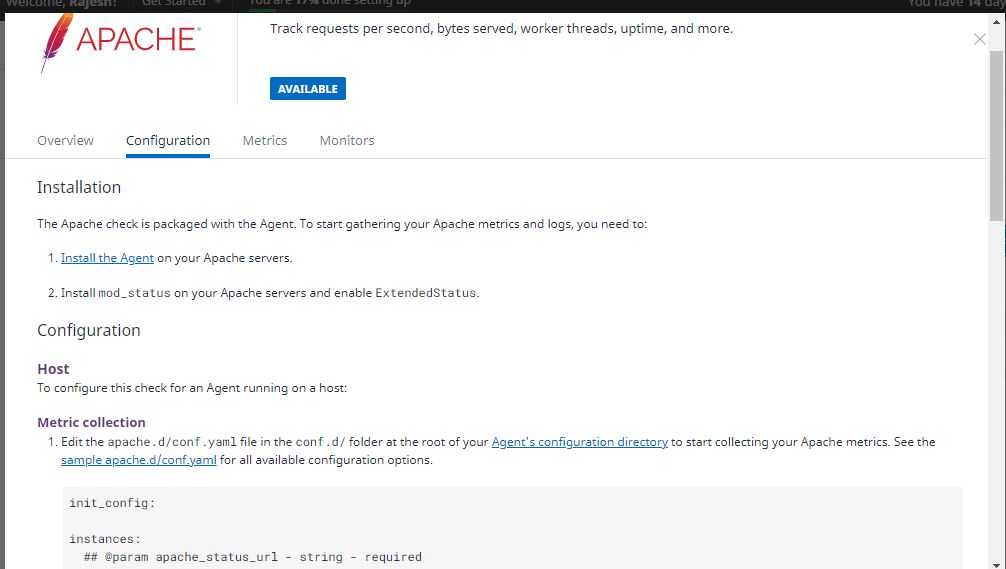

# Install Apache Spark on Red Hat without sudo install

You will need Java for running the History Server, if not sooner.

`sudo add-apt-repository ppa:openjdk-r/ppa`

And add the following line to the top of the file:

`export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64`

Double-check that this is the correct Java home.

When running PySpark, Spark looks for Python in the `/usr/bin` directory. Ubuntu 16.04 on AWS comes only with Python 3.5. When PySpark is run without Python 2.7+, it prints the following error message:

`/usr/apache/spark-2.2.1-bin-hadoop2.7/bin/pyspark: line 45: python: command not found`

There are two options: install Python 2.7+ or create a link to Python 3.
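One way to double-check the Java home is to test that the directory actually contains an executable `bin/java`. This is only a sketch under assumptions: the helper name `is_java_home` is hypothetical, and the candidate path is the one from the `export JAVA_HOME` line, which may differ on your instance.

```shell
# Hypothetical helper: succeed when the directory looks like a Java home,
# i.e. it contains a regular, executable bin/java file.
is_java_home() {
    [ -n "$1" ] && [ -f "$1/bin/java" ] && [ -x "$1/bin/java" ]
}

# Path taken from the export line; adjust it if your JVM lives elsewhere.
candidate=/usr/lib/jvm/java-8-openjdk-amd64
if is_java_home "$candidate"; then
    echo "JAVA_HOME looks correct: $candidate"
else
    echo "No bin/java under $candidate; check the directories in /usr/lib/jvm"
fi
```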

Go into the `hostname` file and change the name to `spark`.

Replace `localhost` with the instance name in the `hosts` file: after `127.0.0.1`, write the name of the instance.
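The `hosts` edit can also be scripted instead of done by hand. The sketch below is an illustration rather than the original procedure: `set_loopback_name` is a hypothetical helper, and it is demonstrated on a scratch file so nothing under `/etc` is touched.

```shell
# Hypothetical helper: rewrite the 127.0.0.1 entry of a hosts-style file
# so that it names the instance. Other lines are copied unchanged.
# Usage: set_loopback_name <hosts-file> <name>
set_loopback_name() {
    sln_file="$1"
    sln_name="$2"
    sln_tmp="$(mktemp)"
    awk -v name="$sln_name" '
        $1 == "127.0.0.1" { print "127.0.0.1 " name; next }
        { print }
    ' "$sln_file" > "$sln_tmp"
    # Write back in place so the file keeps its owner and permissions.
    cat "$sln_tmp" > "$sln_file"
    rm -f "$sln_tmp"
}

# Try it on a scratch file rather than on /etc/hosts itself.
scratch="$(mktemp)"
printf '127.0.0.1 localhost\n' > "$scratch"
set_loopback_name "$scratch" spark
cat "$scratch"   # prints: 127.0.0.1 spark
rm -f "$scratch"
```

Running the helper against the real `/etc/hosts` would need root privileges, and keeping a backup copy first is a sensible precaution.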

I have installed older Apache Spark versions, and now the time is right to install Spark 2.2.1.

Update: Automated Apache Spark install with Docker, Terraform and Ansible? Check out this post.

I'm using an AWS t2.micro instance with Ubuntu 16.04 on it. MobaXterm is my choice of interface to SSH to the instance.